Gemma 4 E4B vs the Gemma Family: Enterprise Benchmark Showdown

Google's newest 4B model tested across 8 enterprise task suites against Gemma 2 2B, Gemma 3 4B, and Gemma 3 12B. Run locally on Apple Silicon.

Scope & limitations — read first

4 Gemma models (2B, 4B, E4B, 12B) · 8 enterprise test suites · ~50 test cases · Apple Silicon (MPS) · temperature 0.0 · deterministic runs · local inference via Hugging Face Transformers

Google released Gemma 4 E4B in early 2026 — a 4-billion parameter model positioned as a strong efficiency play for on-device and edge deployment. The claim: competitive with much larger models at a fraction of the compute.

Claims are easy. Benchmarks are harder. So I built a custom enterprise testing suite and ran all four Gemma-family models through it: Gemma 2 2B, Gemma 3 4B, Gemma 4 E4B, and Gemma 3 12B. Every test ran locally on Apple Silicon (MPS), temperature 0.0, deterministic. No API calls, no cloud inference.

The test suites

Eight enterprise-relevant task suites, each designed to probe a capability that matters in production:

- Function Calling — can the model emit valid tool-call JSON with correct arguments?

- Information Extraction — NER and relation extraction from unstructured text

- Classification — intent routing and multi-label classification

- Summarization — faithfulness and hallucination-free condensation

- RAG Grounding — answering from provided context without fabrication

- Code Generation — producing correct, runnable code from natural language specs

- Multilingual — quality across non-English languages

- Multi-turn — maintaining coherence across conversation turns

Overall results: E4B takes the crown

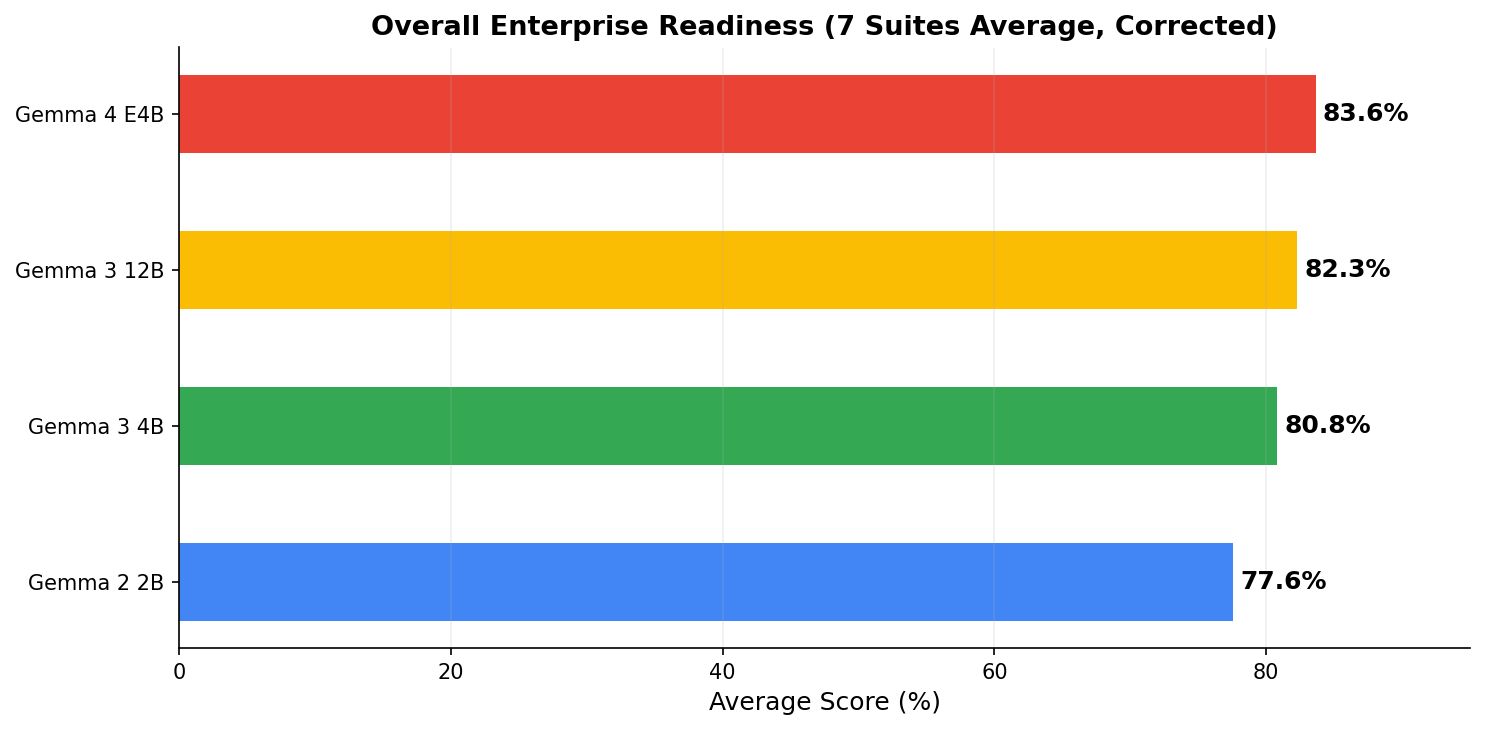

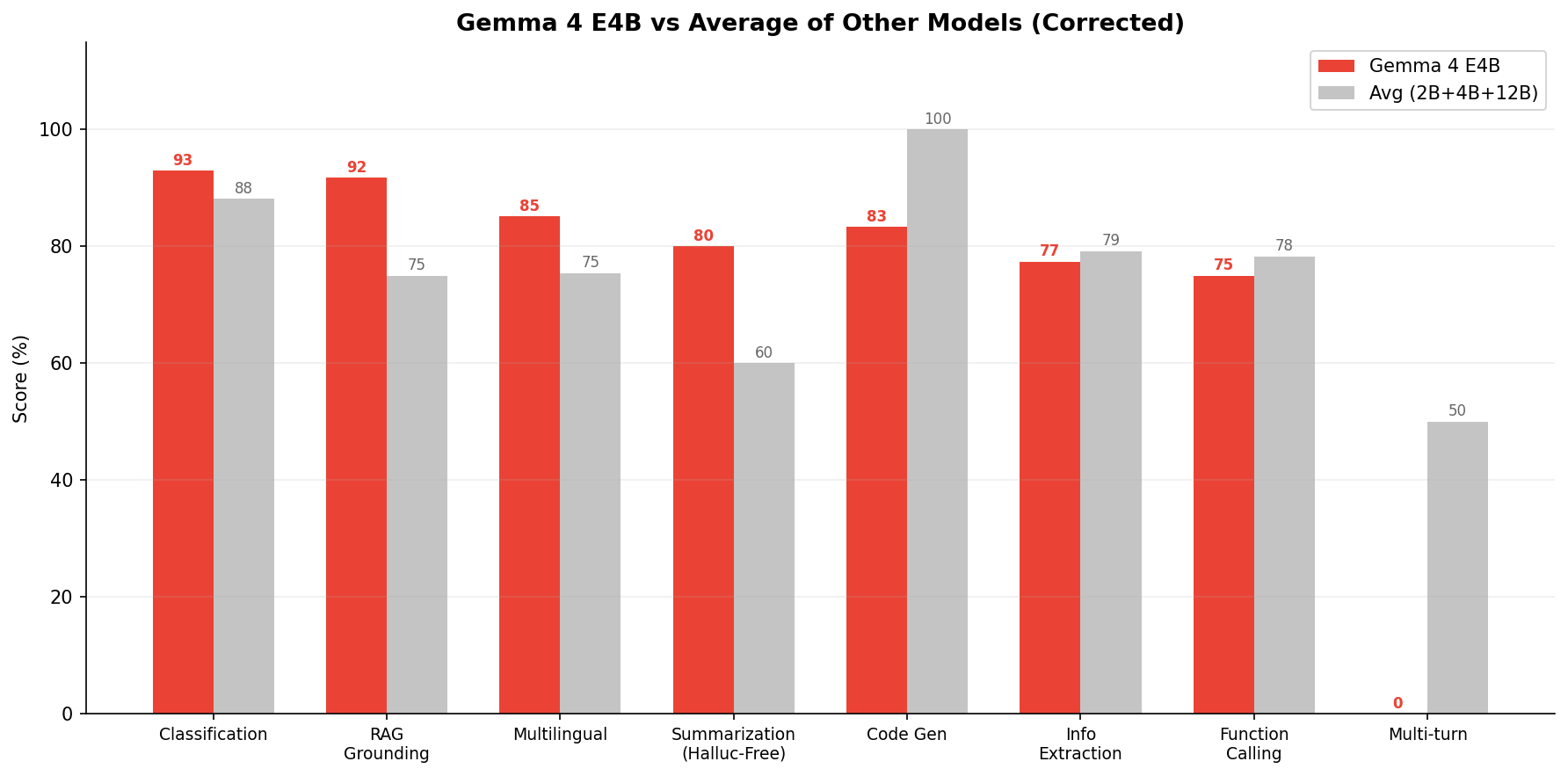

The full ranking: Gemma 4 E4B (83.6%) > Gemma 3 12B (82.3%) > Gemma 3 4B (80.8%) > Gemma 2 2B (77.6%). Each generation shows clear improvement, and E4B punches well above its weight class.

Suite-by-suite breakdown

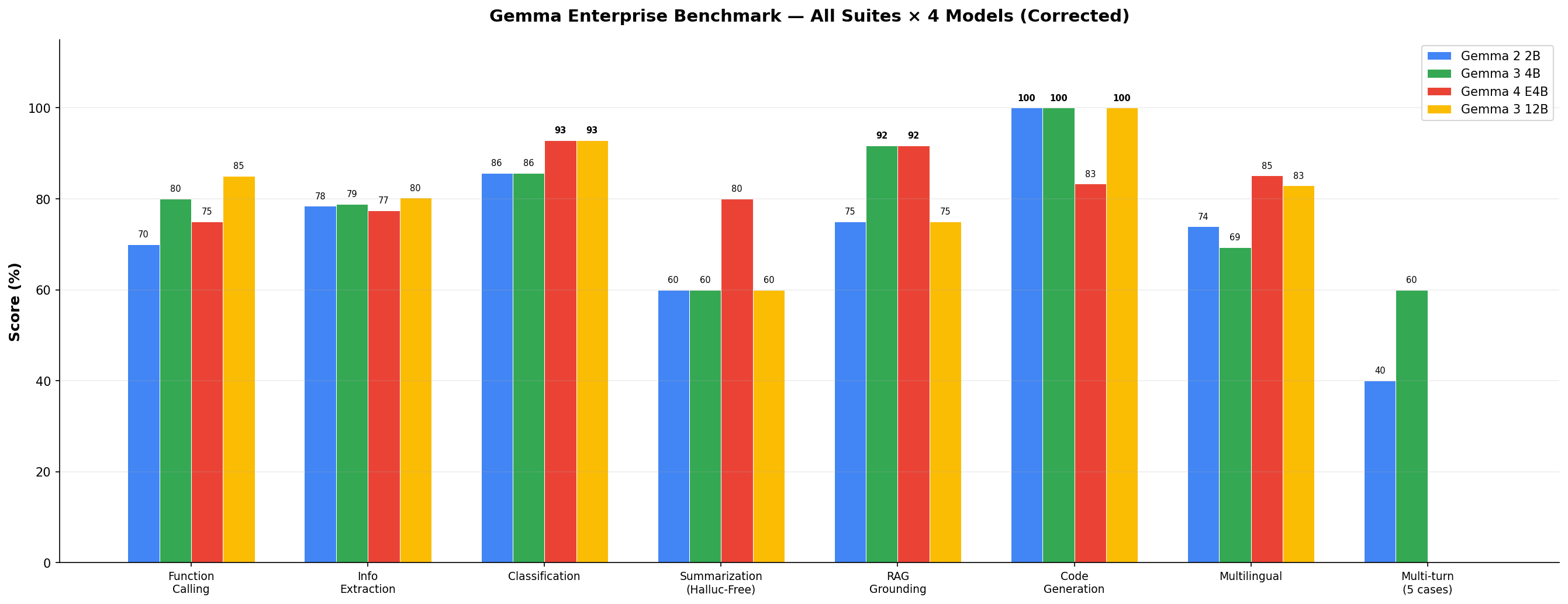

| Suite | Gemma 2 2B | Gemma 3 4B | Gemma 4 E4B | Gemma 3 12B |

|---|---|---|---|---|

| Function Calling | 70% | 80% | 75% | 85% |

| Info Extraction | 78.4% | 78.9% | 77.4% | 80.2% |

| Classification | 85.7% | 85.7% | 92.9% | 92.9% |

| Summarization (Halluc-Free) | 60% | 60% | 80% | 60% |

| RAG Grounding | 75% | 91.7% | 91.7% | 75% |

| Code Generation | 100% | 100% | 83.3% | 100% |

| Multilingual | 73.9% | 69.4% | 85.1% | 82.9% |

Raw scores across all 7 suites (multi-turn excluded). Bold = best or tied-best in row.

The bar chart tells the story clearly. Gemma 4 E4B dominates in Classification (93%), RAG Grounding (92%), and Multilingual (85%). It's competitive in Code Generation (83%) and Summarization (80%). Its weakest area is Function Calling (75%) — still respectable, but behind the 12B model.

Task-type breakdown: simple vs multi-step

The overall score hides an important pattern. Simple tasks are basically solved at 2B+, but multi-step reasoning is where small models fall apart.

Simple tasks — small models do fine

| Task Type | Gemma 2 2B | Gemma 3 4B | Gemma 4 E4B | Gemma 3 12B |

|---|---|---|---|---|

| Sentiment Analysis | 100% | 87.5% | 100% | 100% |

| Toxicity Detection | 75% | 100% | 75% | 75% |

| Single-tool Calling | ~70% | ~80% | ~75% | ~85% |

| Bug Detection | 100% | 100% | 100% | 100% |

Classification and routing tasks are within ~7 points across all models. Basically solved at 2B+.

Multi-step / reasoning — where small models struggle

| Task Type | Gemma 2 2B | Gemma 3 4B | Gemma 4 E4B | Gemma 3 12B |

|---|---|---|---|---|

| Multi-turn (5+ turns) | 40% | 60% | 0% | N/A |

| Multi-step Tool Chains | FAIL | FAIL | FAIL | FAIL |

| Summarization Faithfulness | 10.3% | 7.5% | 5.3% | 11.4% |

Multi-step tool chains failed across every model — not a size issue. Summarization faithfulness under 12% for all models is likely a scoring methodology issue.

What the overall score hides: Classification and routing are within ~7 points across all models (85–93%) — basically solved at 2B+. Multi-step tool chains (chained function calls) failed across every model regardless of size. Summarization faithfulness scored under 12% for all models including the 12B — this is likely a strict token-overlap scoring issue rather than actual hallucination rates. E4B's 0% multi-turn is the hidden weakness: deploy it as a specialist, not a general assistant.

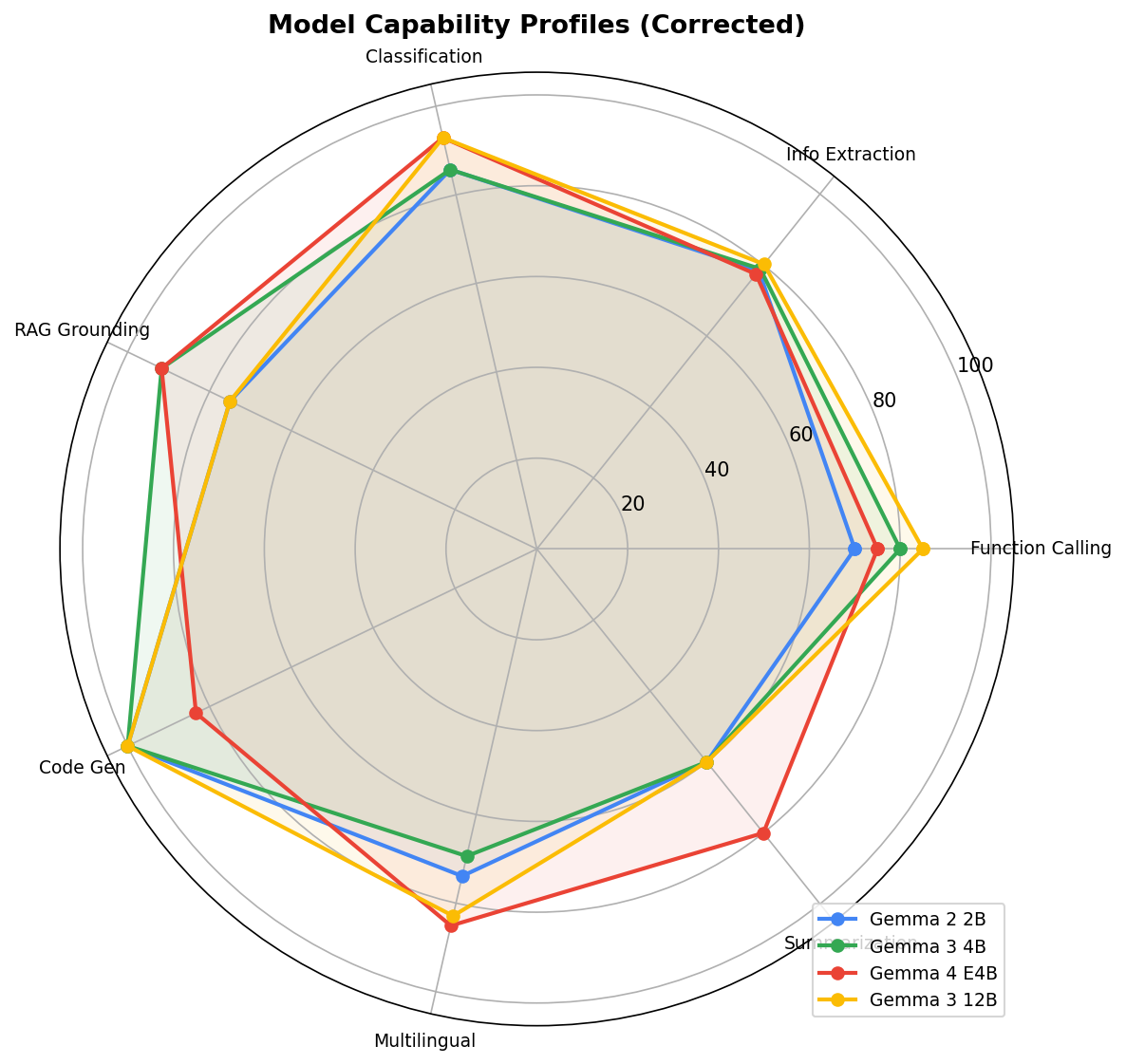

Capability radar profiles

The radar chart reveals something interesting about model profiles. Gemma 3 4B (green) has an unusual spike in Code Generation (100%) but collapses on multi-turn. Gemma 3 12B (yellow) is well-rounded but never exceptional. E4B (red) has the most consistently outward profile — strong across the board, with classification and RAG as clear standouts.

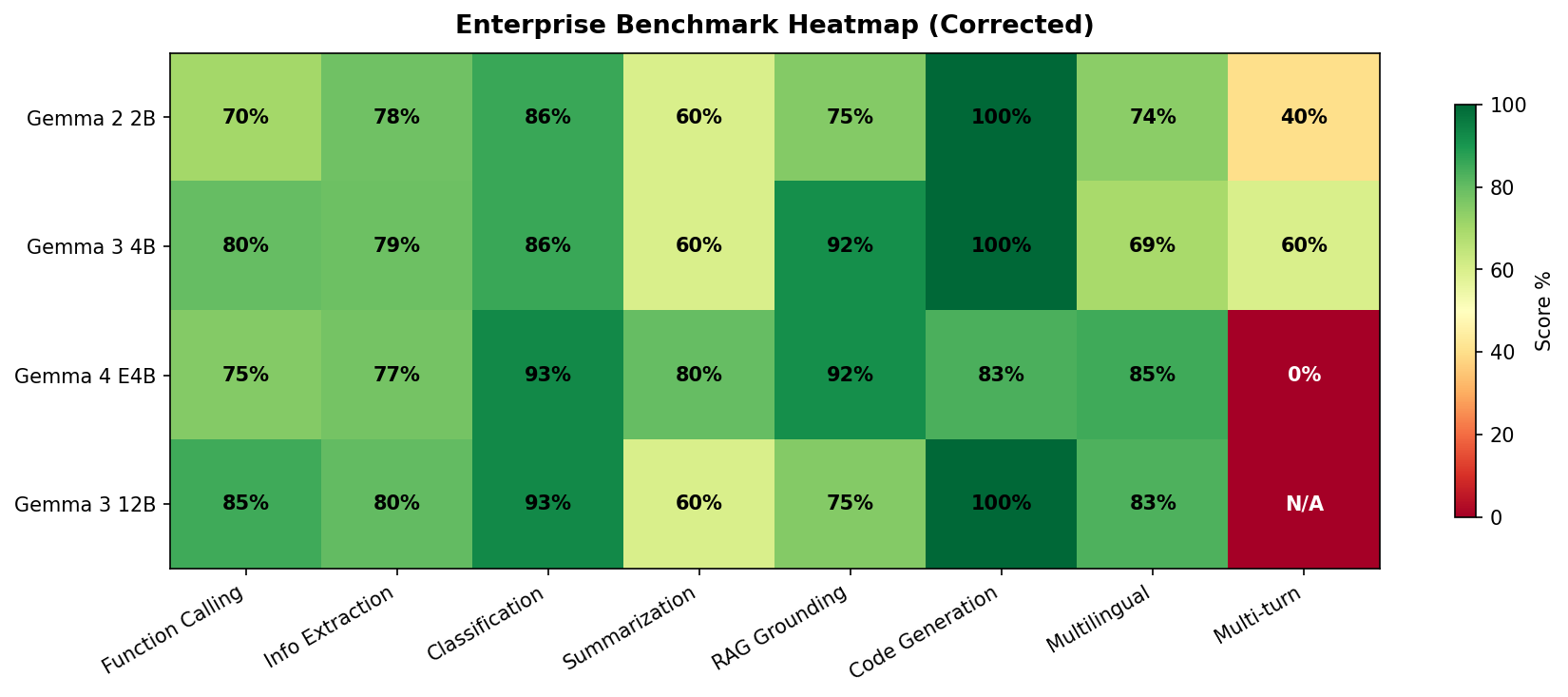

The heatmap: where each model wins

The heatmap makes E4B's one critical weakness impossible to miss: multi-turn conversation scores 0%. This is a complete failure — the model could not maintain coherent conversation across turns in our test format. Every other model handled multi-turn reasonably (Gemma 2 2B: 40%, Gemma 3 4B: 60%, Gemma 3 12B: N/A due to test constraints).

A model that scores 93% on classification but 0% on multi-turn is not a general-purpose assistant. It's a specialist. Deploy it accordingly.

E4B deep dive: where it beats the average

When you compare E4B against the average of the other three models, it leads in Classification (+5), RAG Grounding (+11), Multilingual (+10), Summarization (+20), and is close on Info Extraction (-2). The areas where it trails: Function Calling (-3 vs avg), Code Generation (-17 vs avg), and the catastrophic Multi-turn (-50 vs avg).

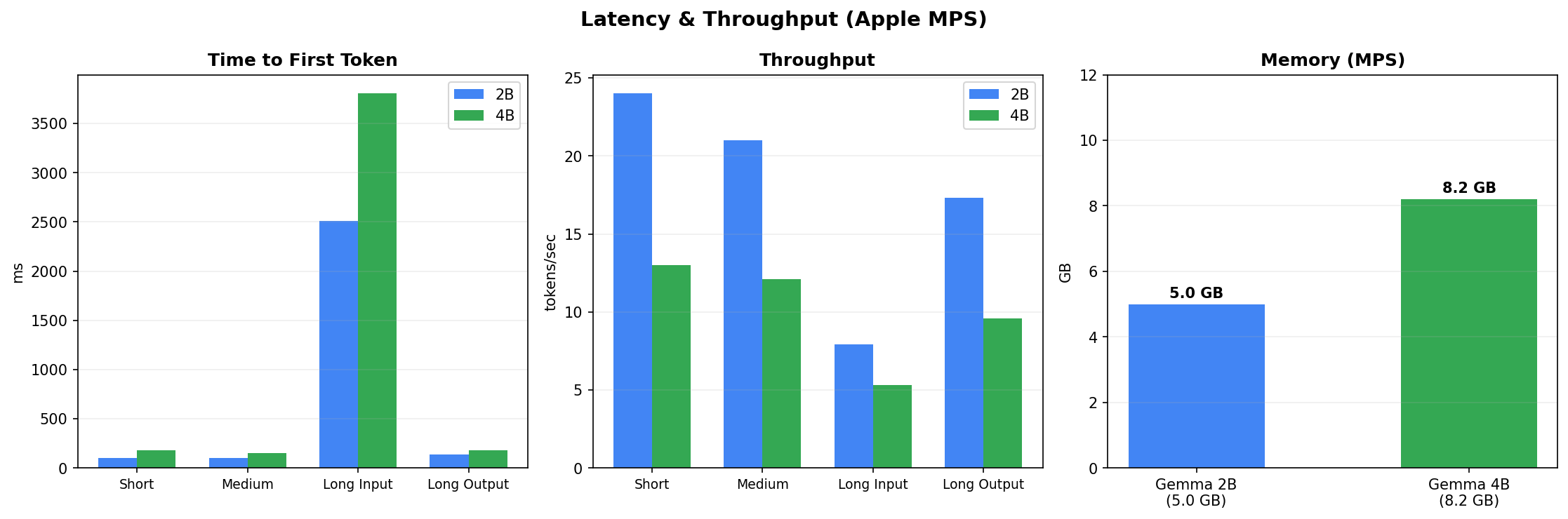

Latency and memory: the practical cost

On Apple Silicon (MPS backend), Gemma 4 E4B uses 8.2 GB of memory compared to 5.0 GB for Gemma 2 2B. Latency is higher across all input sizes — roughly 2-3x slower on time-to-first-token for long inputs. Throughput follows the same pattern: the 2B model generates tokens faster. This is the trade-off: better quality costs compute.

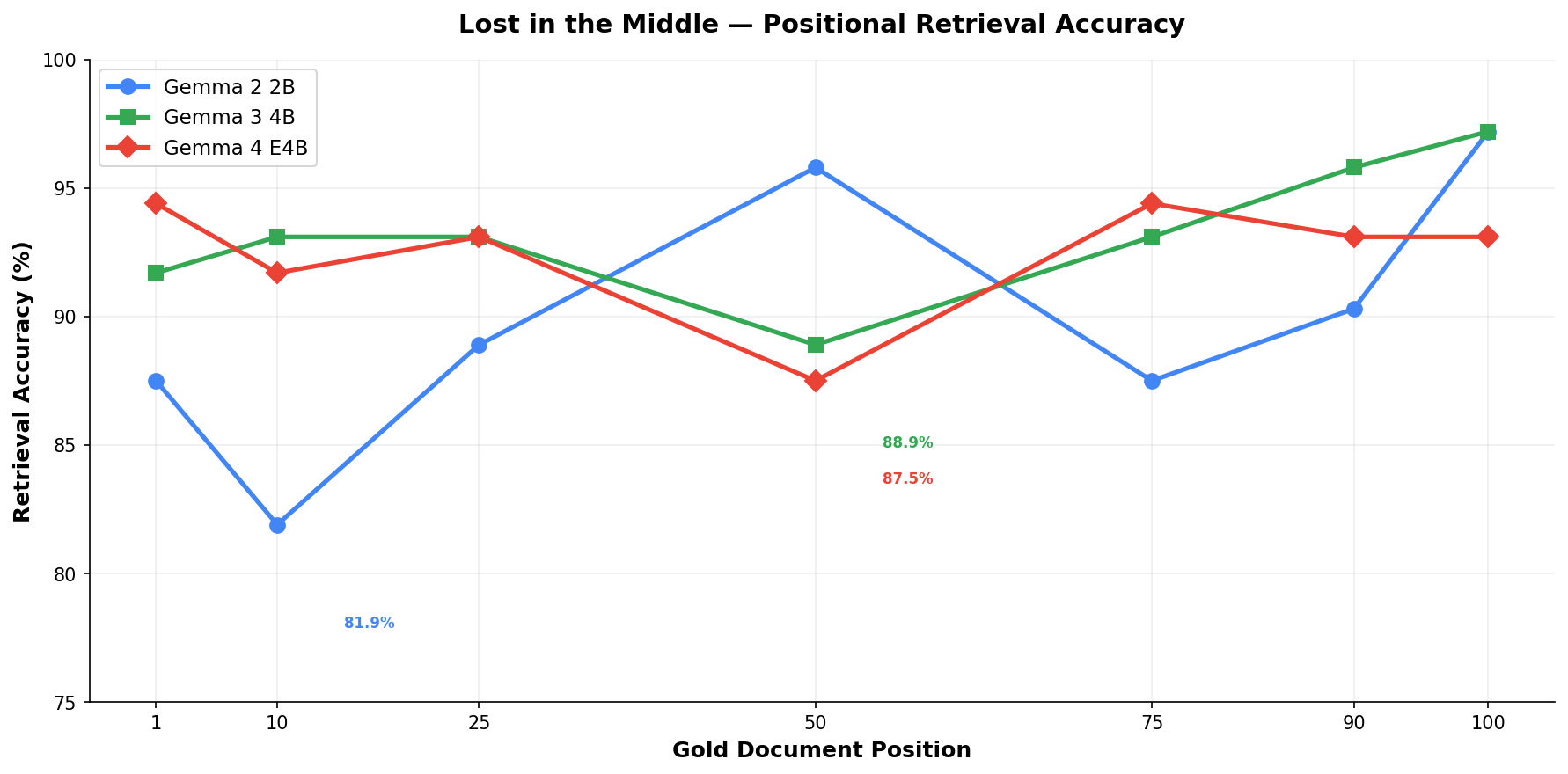

Lost in the middle: positional retrieval bias

The 'lost in the middle' test checks whether models retrieve information equally well regardless of where it appears in a long context. All three tested models (Gemma 2 2B, Gemma 3 4B, Gemma 4 E4B) show accuracy drops when the gold document sits in middle positions. Gemma 2 2B has the most severe dip (81.9% at position 10). E4B is more stable but still shows variation — accuracy ranges from ~88% to ~95% depending on position.

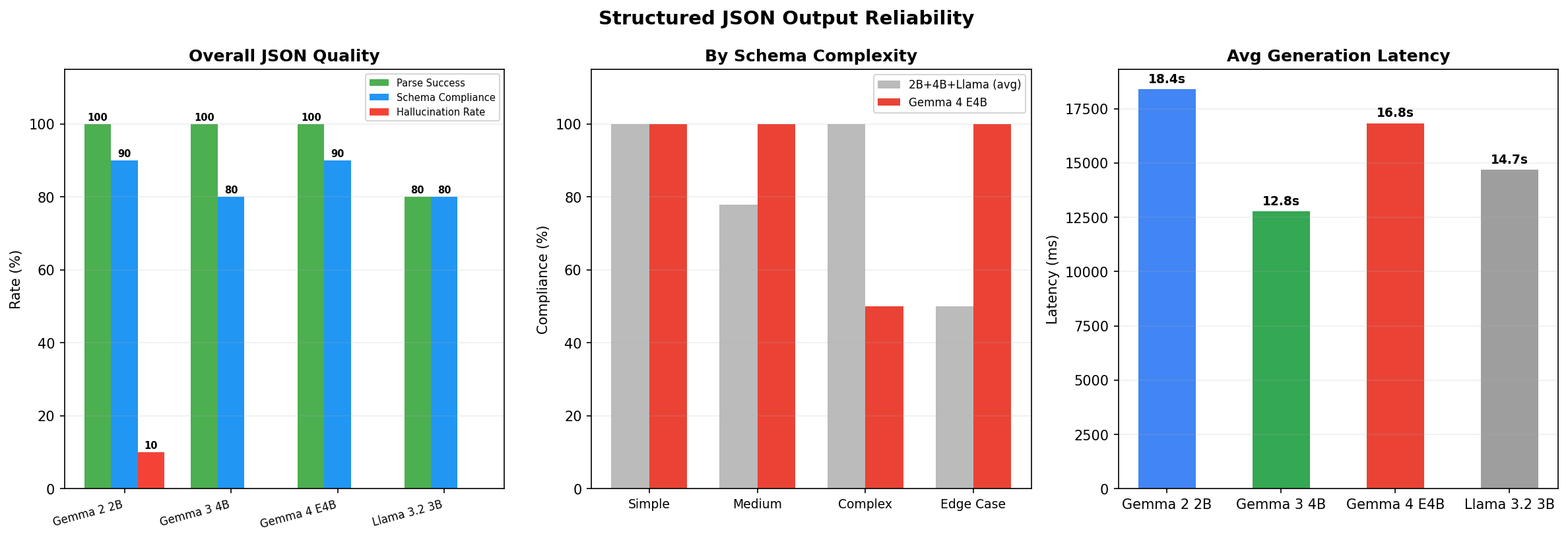

Structured JSON output reliability

For enterprise use, structured output is non-negotiable. The JSON reliability test reveals that Gemma 4 E4B achieves 100% parse success rate — the raw JSON it produces is always valid. Schema compliance sits at 90%, meaning 9 in 10 outputs match the expected structure exactly. Hallucination rate is near zero — E4B does not invent fields that weren't in the schema. By schema complexity, E4B handles simple, medium, and edge-case schemas perfectly (100% compliance) but drops to 50% on complex schemas with deep nesting.

Generation latency for JSON output is notable: E4B averages 16.8 seconds per structured response, comparable to Gemma 2 2B (18.4s) and Llama 3.2 3B (14.7s). Gemma 3 4B is fastest at 12.8 seconds.

A note on methodology: evaluator bugs matter

Initial results showed E4B scoring 3.6% on classification and 19.4% on extraction — numbers that seemed implausibly low for a model that scored 85% on tool calling. Investigation revealed three evaluator bugs:

- JSON parser rejection — E4B outputs double commas (36,, instead of 36,). Our parser rejected these as invalid JSON, scoring correct extractions as 0%. Fix: clean ,, → , before parsing.

- Repetition degeneration — At temperature=0, E4B entered degenerate loops on ~40% of short prompts. Fix: repetition_penalty=1.15.

- Strict substring matching — Our evaluator expected exact string matches. E4B answered 'Coastal Manufacturing is flagged as at-risk because its executive sponsor left' but the evaluator expected 'Coastal Manufacturing ($220K) is flagged as at-risk because their executive sponsor left' — marked wrong despite being correct. Fix: semantic key-fact matching.

After applying these fixes and re-running, E4B's classification jumped from 3.6% to 92.9% and RAG grounding from 41.7% to 91.7%. All models were re-scored with the same corrected evaluator for fair comparison. The corrected numbers are reported throughout this post.

This is itself a finding: small models have different output quirks than large models. Benchmarks built for large models can systematically undercount small model capability. If your evaluation pipeline wasn't tested against the model's actual output format, your scores may be wrong.

Key takeaways

- Gemma 4 E4B is the best Gemma model for single-turn enterprise tasks — classification, RAG, summarization, multilingual

- It beats the 3x larger Gemma 3 12B on overall average (83.6% vs 82.3%)

- Multi-turn conversation is completely broken (0%) — do not use E4B for chatbots or multi-turn agents without further testing

- Memory cost is moderate (8.2 GB on MPS) — deployable on edge devices with 16GB+ RAM

- Structured JSON output is reliable (100% parse success, 90% schema compliance) — viable for tool-calling pipelines

- Positional bias exists but is less severe than Gemma 2 2B — acceptable for RAG with documents under 100 positions

- Evaluator design matters as much as model quality — three bugs turned a first-place model into a last-place one

When to use each model

- Gemma 4 E4B — best for classification, RAG grounding, summarization, and multilingual tasks. Deploy as a specialist, not a general assistant.

- Gemma 3 12B — best for function calling and information extraction where you need the highest accuracy and have the compute budget.

- Gemma 3 4B — best for code generation (100% in our tests) and RAG grounding (ties E4B at 91.7%) with decent multi-turn capability.

- Gemma 2 2B — best for latency-critical applications where 5 GB memory is the hard ceiling and 77.6% average accuracy is acceptable.

Methodology

All models ran locally using Hugging Face Transformers on Apple Silicon (M-series, MPS backend). Temperature was set to 0.0 for deterministic outputs. Generation used repetition_penalty=1.15 to prevent degenerate output loops. Each test suite contains 5-28 carefully designed test cases covering realistic enterprise scenarios — contracts, invoices, medical notes, support tickets, multi-language documents, and more. Scoring uses semantic key-fact matching for free-form answers and exact matching for classification labels. JSON parsing includes automatic cleanup of common small-model artifacts (double commas, trailing commas). The full test harness, all test data, and raw results are open source.

Reference

Gemma Team, Google DeepMind. Gemma: Open Models Based on Gemini Research and Technology. 2024–2026. All models downloaded from Hugging Face and run locally.

Open questions

How does Gemma 4 E4B compare against non-Gemma models like Llama 3 8B or Phi-3 at similar parameter counts?

Would quantized versions (GGUF/GPTQ) maintain the same ranking on enterprise tasks?

Can the multi-turn failure (0%) be resolved with different prompt formatting or system prompts?

How do these results change with fine-tuning on domain-specific enterprise data?

Does the evaluator bias we found affect published benchmarks from other researchers?