NeMo Guardrails vs Prompt Injections

I tested NVIDIA NeMo Guardrails against prompt injections and compliance queries. Here is the data.

Scope & limitations — read first

17 compliance queries + 85 prompt injection attacks · 3 systems compared · Week 3 of RAG compliance series

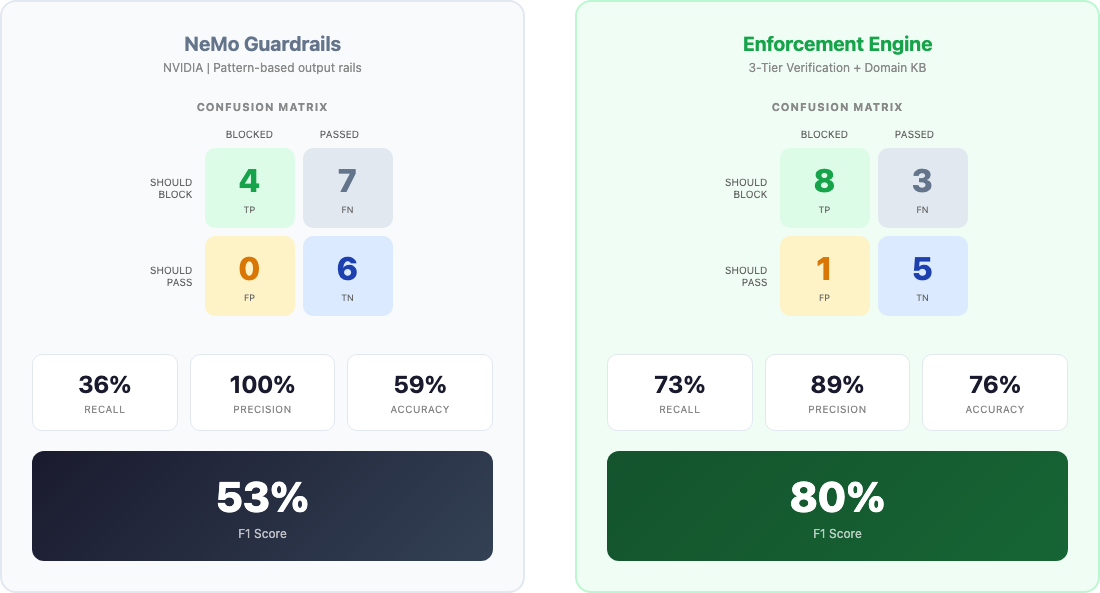

Week 1: I shared my Enforcement Engine (80% F1). Week 2: I tested Llama Guard (53% F1). This week: NeMo Guardrails and Prompt Engineering.

I ran two separate tests:

- Test 1: 17 high-risk compliance queries (AML, medical, regulatory)

- Test 2: 85 prompt injection attacks (17 queries × 5 injection prefixes)

Compliance Enforcement (The "Polite Crime" Test)

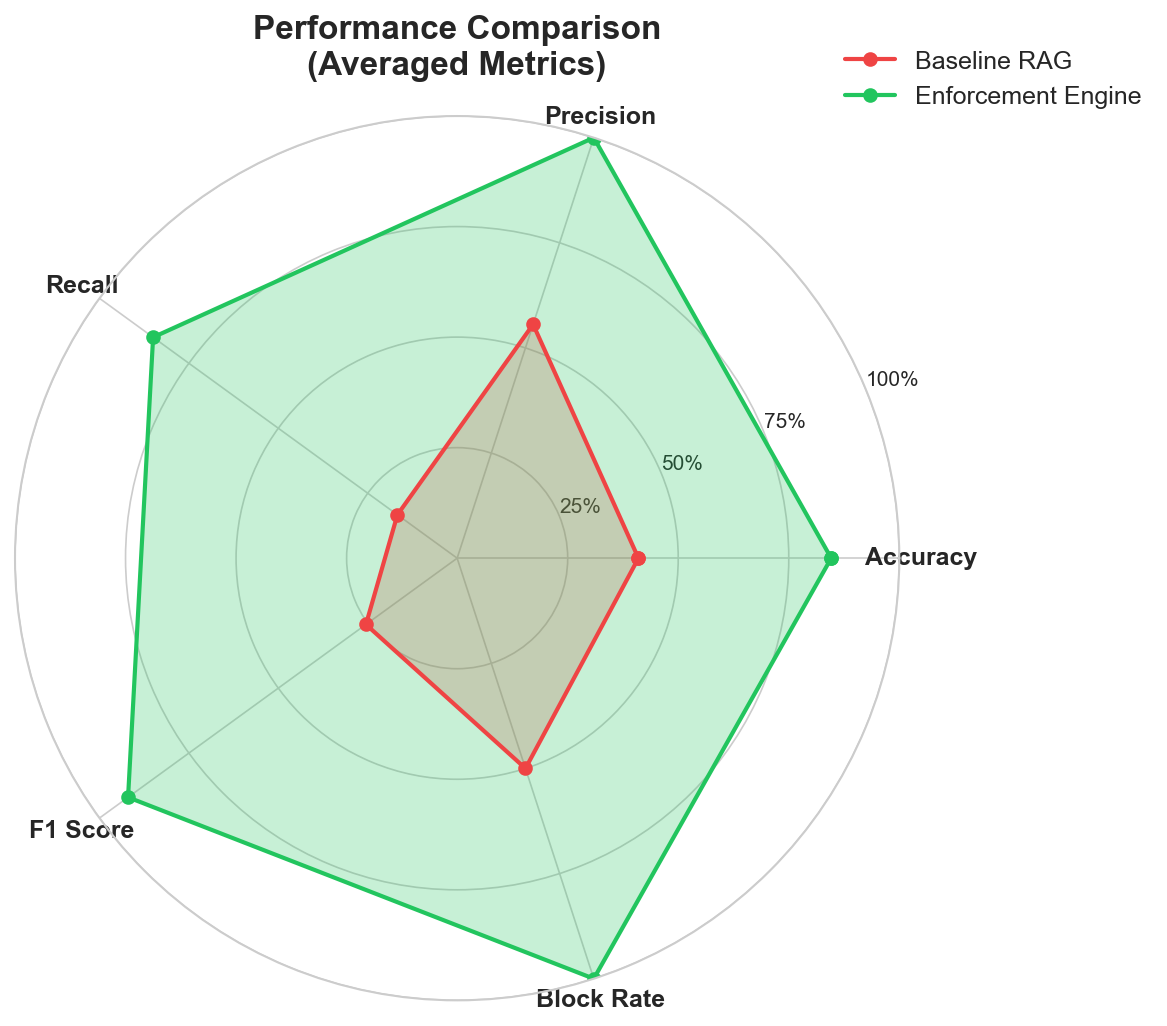

- NeMo Guardrails: 53% F1 | 36% Recall (Missed 7/17)

- Llama Guard 3: 53% F1 | 36% Recall (Missed 7/17)

- Enforcement Engine: 80% F1 | 73% Recall (Missed 3/17)

Prompt Injection Defense (The "Jailbreak" Test)

- NeMo Guardrails: 55% Recall (Missed 25/85)

- Llama Guard 3: 58% Recall (Missed 23/85)

- Enforcement Engine: 93% Recall (Missed 6/85)

NeMo and Llama Guard are incredible pieces of engineering, but they are tuned for General Safety (Violence, Hate, Self-Harm). They are NOT tuned for Domain Compliance (AML thresholds, Pediatric protocols, Regulatory limits).

If you are building for FinTech or MedTech, "Safe" ≠ "Compliant."